AI Essentials for Investigative Intelligence | 6-Part Video Series

Investigative intelligence teams face a widening gap between the volume of language-heavy data they collect and their capacity to make sense of it. Calls, messages, reports, surveillance outputs, and online content pour in faster than ever, yet the work of reviewing them often still falls to analysts and translators reading line by line.

In Part 2 of our AI Essentials for Investigative Intelligence series, we focus on language analysis in investigations and on where natural language processing (NLP), a subfield of AI, can realistically help accelerate investigative intelligence workflows without replacing human judgment or introducing unnecessary risk.

Hi, I’m Sean, and I’m a Senior AI Product Manager at JSI. Thanks for joining us for Part 2 of this video series, titled AI Essentials for Investigative Intelligence, where we break down the core AI capabilities that matter for investigative work, show where they truly add value, and give you a clear understanding of the considerations that should guide every deployment. This episode, in particular, is going to cover natural language processing, or NLP.

Before we get into it, let’s quickly go over what we’ve covered in the series so far, and where we’re headed next. In Part 1, we talked about the data problem that law enforcement and intelligence teams are up against, and where AI fits into it all. If you haven’t watched that video yet, I encourage you to start there, because it really sets the stage for what’s to come in the later videos. In this part, we’ll start with the first AI subfield: natural language processing, explaining what it is and how it can be used to sift through massive amounts of data and pull out pertinent intelligence. Then we’ll move on to other subfields like computer vision and generative AI. We’ll also discuss how these AI capabilities come together into emerging AI systems that help perform real-world investigative workflows. And finally, we’ll end with the compliance and ethics of AI in investigative intelligence.

So now, let’s start by defining what natural language processing actually is. When we talk about natural language processing (NLP), we’re really talking about a subfield of AI designed to help computers work with human language, not in a keyword-matching way, but in a way that helps them understand structure, meaning, nuance, and intent. NLP helps break language apart in much the same way humans naturally do: it analyzes grammar, interprets phrases, and makes sense of context so a system can understand what someone is trying to communicate. At its core, NLP is the bridge that lets machines understand human communication in a more human way.

Where NLP becomes truly valuable for law enforcement and intelligence work is in the types of data you deal with every single day. In telephony, for example, NLP, and speech analytics technologies in particular, can transcribe and translate conversations and surface meaning or intent without requiring teams to listen to every minute of audio. In messaging data, it can quickly make sense of text conversations, slang, or even coded exchanges that may otherwise take a lot of time to interpret.

For surveillance audio, NLP and speech technologies can summarize long recordings and highlight key segments so investigators can zero in on what matters most. With things like body packs, NLP can help extract relevant dialogue from busy or noisy environments, easing the burden of reviewing long, raw recordings. And with online activity, whether it’s forums, chats, or social platforms, NLP makes language searchable and easier to analyze at scale. All of these sources produce huge volumes of human communication, and NLP helps efficiently convert that noise into intelligence that investigators can actually act on.

Let’s get more specific with some concrete examples of how this technology can be used within your workflows. When we talk about natural language processing and the types of data you’re collecting, a big part of that data is human speech. That might come from recordings or videos where people are talking with one another, not through a text medium, but by communicating with their voices. As a key part of an early NLP pipeline, you might need to incorporate tools like automatic speech recognition, which can transcribe spoken words into text that can later be analyzed by other NLP tools. Typically, this means you’ll have generated transcripts that include the spoken words along with timestamps showing when those words were spoken.

We can even layer on additional capabilities like diarization to separate unique speakers, and speaker identification to figure out who is saying what. Of course, there are challenges associated with this type of technology as well. With the current state of the art, things like silence, background speech, and music can create complications that produce less-than-desirable outputs. Dialects across different languages, or blended languages within the same stretch of speech, can also create complications, especially if the model hasn’t been trained sufficiently on the source language being transcribed. And because this is the first step in a more holistic pipeline that analyzes human communication, any problem in the transcript generated upfront can have downstream impacts on every other process you run. That’s why understanding confidence scores, and the overall accuracy of the transcript, is a critical first step, especially when you add capabilities such as translation.
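To make the transcript structure and the role of confidence scores a little more concrete, here is a minimal Python sketch. It assumes a speech recognition tool that returns timestamped segments, each with a speaker label from diarization and a confidence value between 0 and 1. The `Segment` fields, the `needs_review` helper, the 0.80 threshold, and the sample values are all hypothetical and only for illustration; real tools expose this information in their own formats.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start: float       # seconds from the start of the recording
    end: float
    speaker: str       # diarization label (hypothetical naming)
    text: str
    confidence: float  # 0.0 to 1.0, as reported by a hypothetical ASR tool


def needs_review(segments, threshold=0.80):
    """Return the segments whose confidence falls below the threshold."""
    return [s for s in segments if s.confidence < threshold]


# Illustrative values only, not real data
transcript = [
    Segment(0.0, 3.2, "SPEAKER_1", "Meet me at the usual place.", 0.94),
    Segment(3.4, 6.1, "SPEAKER_2", "[unclear] tomorrow night.", 0.52),
    Segment(6.3, 9.0, "SPEAKER_1", "Bring the car.", 0.88),
]

for seg in needs_review(transcript):
    print(f"{seg.start:.1f}-{seg.end:.1f}s {seg.speaker}: {seg.text} ({seg.confidence:.2f})")
```

Routing low-confidence segments to a person before translation or classification is one way to limit the downstream impact of transcription errors. The right threshold depends on the tool and the language, so it would need to be tuned against your own data.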

With automatic speech recognition, you’re transcribing spoken language into text. But if someone is speaking in a different language, you may also want to translate that content. A lot of research has gone into this space over the last several decades, and there are tools like Google Translate, among many others, that can do this. One approach is called neural machine translation: it trains models on large sets of example text paired across two languages, so they learn to transform text from a source language into a target language. This can introduce challenges as well, depending on how the models are built. In some cases, the output can resemble a very literal word-for-word translation, which can lose nuance and meaning. Some large language models are getting better at this, but it’s still far from perfect. Critically, even if you have neural machine translation running within your investigative environment, any intelligence you identify that may be actionable (or help inform a decision) should still be reviewed by a human translator or interpreter to ensure the meaning is captured as intended.

As we continue to analyze data, we also have other tools in our toolbox, like sentiment or emotion analysis. This can be powerful for identifying overall sentiment, such as positive, negative, or neutral, in individual text messages or social media posts, or even conversations collected through different audio sources. More than anything, it can help you look at trends over time. For example, you might look at a Facebook group and track how sentiment changes; that might be a useful indicator to monitor as you assess potential future activity. So there are tools around sentiment and emotion analysis that can help categorize text in these ways.
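As a rough illustration of tracking sentiment over time, the sketch below groups per-post sentiment scores by ISO week and averages them. It assumes a sentiment model has already scored each post on a scale from -1 (negative) to 1 (positive). The `weekly_sentiment` function, the dates, and the scores are hypothetical.

```python
from collections import defaultdict
from datetime import date
from statistics import mean

# (post date, sentiment score in [-1, 1]); illustrative values only
scored_posts = [
    (date(2024, 1, 3), 0.4),
    (date(2024, 1, 5), 0.2),
    (date(2024, 1, 10), -0.1),
    (date(2024, 1, 12), -0.5),
    (date(2024, 1, 17), -0.7),
]


def weekly_sentiment(posts):
    """Average the sentiment scores of posts that fall in the same ISO week."""
    buckets = defaultdict(list)
    for day, score in posts:
        iso = day.isocalendar()
        buckets[(iso[0], iso[1])].append(score)  # key: (ISO year, ISO week)
    return {week: mean(scores) for week, scores in sorted(buckets.items())}


trend = weekly_sentiment(scored_posts)
for (year, week), avg in trend.items():
    print(f"{year}-W{week:02d}: {avg:+.2f}")
```

A steady move in one direction across several weeks is the kind of signal an analyst might then examine more closely; the average on its own says nothing about why the tone changed.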

Another powerful capability is text classification. As an investigator or an intelligence analyst, you might be looking for very specific types of data that fall into certain categories or topics. Text classification lets you define the key topics or classes that matter to your investigation, such as meetings, narcotics, weapons, or threats.

It takes your input data, for example: “Hey buddy, if you ever come near my turf again, I’ll make sure you won’t be able to walk”, and scores it against the categories you care about to see how relevant each one is. In this example, the model identifies a strong possibility that there is a threat in the statement. I want to be very clear: these models are not perfect. False positives can occur, where a model tags a piece of data with a category that isn’t actually accurate, which is why human-in-the-loop workflows are important for validating and reviewing model outputs. For example, the model might analyze a statement like, “We’re going to destroy them tomorrow night at the game. Go team!”, and incorrectly flag it as a threat. In that case, we would consider it a false positive.

So when you evaluate these models for use in your operational environment, it’s important to benchmark them against real-world data, for example, data you know contains threats or weapon references, and validate what the model is producing. That kind of tool can run across large volumes of text and help you quickly identify content that includes threats, which may be a high-priority focus for you.
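One simple way to benchmark a classifier like this is to compare its scores against human-verified labels and compute precision and recall at a chosen threshold. The sketch below assumes the model returns a "threat" score between 0 and 1 for each message. The scores, the labels, the fourth message, and the `precision_recall` helper are hypothetical and only illustrate the arithmetic.

```python
# (text, model "threat" score, human-verified label); illustrative values only
labelled = [
    ("Hey buddy, if you ever come near my turf again, "
     "I'll make sure you won't be able to walk", 0.91, True),
    ("We're going to destroy them tomorrow night at the game. Go team!", 0.78, False),
    ("See you at the meeting on Friday", 0.05, False),
    ("You'll regret this, watch your back", 0.64, True),
]


def precision_recall(examples, threshold):
    """Flag every example scoring at or above the threshold, then compare with the labels."""
    tp = sum(1 for _, score, truth in examples if score >= threshold and truth)
    fp = sum(1 for _, score, truth in examples if score >= threshold and not truth)
    fn = sum(1 for _, score, truth in examples if score < threshold and truth)
    precision = tp / (tp + fp) if tp + fp else 0.0  # share of flags that were real threats
    recall = tp / (tp + fn) if tp + fn else 0.0     # share of real threats that were flagged
    return precision, recall


for threshold in (0.7, 0.6):
    p, r = precision_recall(labelled, threshold)
    print(f"threshold={threshold}: precision={p:.2f}, recall={r:.2f}")
```

In this toy data, lowering the threshold catches the second genuine threat but still lets the "Go team!" false positive through. That is the trade-off a human-in-the-loop review step is meant to absorb.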

Another important tool in the NLP toolbox is named entity recognition. This becomes valuable when you’re working with large amounts of text that reference people, places, organizations, and other entities. Entities are the focal point of any investigation or analytical workflow in this space, so you want to be able to identify the specific entities referenced within the content.

As we see here, we’ve got Harry identified as a person, McDonald’s as a location, Plainfield St. as a location, and Bart as a person. But we also want to start establishing links between these entities. For example, we can see that the sender went to see Harry and Bart. From there, we can establish a relationship between the person who produced this content (or whoever sent the message) and those entities, because they clearly know who Harry is and who Bart is. Once we establish that link, we can do additional downstream processing on these extracted entities. For example, we found McDonald’s on Plainfield St.; we can pull that out and run it through a geocoding tool so we can tag location data onto this record as well. This further enriches the source data we’ve collected and makes it even more valuable from an analytical perspective.
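The linking and enrichment steps described above can be sketched in a few lines of Python. This assumes a named entity recognition model has already returned the entities from the example message. The `Entity` type, the `sender_001` identifier, the `knows` relationship label, and both helper functions are hypothetical, and the geocoding call itself is left out because it depends on whichever tool you use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    text: str
    label: str  # "PERSON" or "LOCATION" in this sketch


# Hypothetical NER output for the example message
entities = [
    Entity("Harry", "PERSON"),
    Entity("McDonald's", "LOCATION"),
    Entity("Plainfield St.", "LOCATION"),
    Entity("Bart", "PERSON"),
]


def build_links(sender, found):
    """Link the message sender to each person mentioned in the message."""
    return [(sender, "knows", e.text) for e in found if e.label == "PERSON"]


def geocoding_query(found):
    """Join the location entities into one string to hand to a geocoding tool."""
    return ", ".join(e.text for e in found if e.label == "LOCATION")


links = build_links("sender_001", entities)
query = geocoding_query(entities)
print(links)
print(query)
```

The resulting links could feed a relationship graph, and the query string is what would be passed to a geocoder to attach coordinates to the record.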

Now, if we put all these examples together, we can build a workflow for a scenario-based investigation using the different NLP tools that are available. There are a few common data sources that investigators deal with every day, from text messages to emails to foreign-language audio files, for example. Let’s focus on the audio in particular: we’ll start with speech technology using automatic speech recognition, which detects the language and transcribes the conversation into text. Then we run additional enrichments downstream. For example, we translate the transcript into our operational language, often English, though in your case it may be different. Once we’ve translated and normalized that within our environment, we can run things like entity recognition to pull out key entities (like a BMW), and use text classification to detect signals such as a financial transaction.

Taken together, these enrichments help produce decision-ready insights from a single piece of content that you’ve ingested. And of course, you can scale this across millions of pieces of content you collect as part of your investigations, whether it’s transcribed audio, social media and OSINT activity, or other sources of information relevant to your case.
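To show how these stages chain together, here is a minimal Python sketch of the audio workflow. Each stage function is a stand-in that returns fixed, illustrative values where a real system would call a speech recognition, translation, entity recognition, or classification model. The function names, the record fields, the file name, and the sample sentence are all hypothetical.

```python
def run_pipeline(record, stages):
    """Apply each enrichment stage in order and note which stages ran."""
    enriched = dict(record)
    enriched["stages_run"] = []
    for name, stage in stages:
        enriched.update(stage(enriched))
        enriched["stages_run"].append(name)
    return enriched


# Stand-in stages: each returns fixed values in place of a real model call
def transcribe(rec):
    return {"language": "es", "transcript": "Te pago el BMW mañana."}


def translate(rec):
    return {"translation": "I will pay you for the BMW tomorrow."}


def extract_entities(rec):
    return {"entities": ["BMW"] if "BMW" in rec["translation"] else []}


def classify(rec):
    return {"labels": ["financial transaction"] if "pay" in rec["translation"] else []}


stages = [
    ("asr", transcribe),
    ("translation", translate),
    ("ner", extract_entities),
    ("classification", classify),
]

result = run_pipeline({"source": "audio_0001.wav"}, stages)
print(result["stages_run"])
print(result["entities"], result["labels"])
```

Keeping a record of which stages ran on each item makes it easier to trace an insight back through translation and transcription when a human reviewer needs to validate it.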

So that wraps up Part 2 on natural language processing. In the next video, Part 3, we’ll shift gears toward computer vision. We’ll look at how AI can make sense of images and videos at scale, helping you spot patterns and evidence more quickly.

Then we’ll move to Part 4, which dives into generative AI, and Part 5, where we’ll focus on how these different AI capabilities come together into emerging AI systems for law enforcement and intelligence operations. Part 6 is where we’ll wrap up with a review of compliance and ethics as it pertains to artificial intelligence within investigative environments. So thank you again for watching. We hope to see you in the next part of the video series. And of course, if you want to learn more about JSI, please visit jsitelecom.com.

What You’ll Learn in Part 2

- What natural language processing is and how it analyzes structure, meaning, and intent across large volumes of language-based investigative data

- Where NLP delivers value in investigations, including AI-assisted analysis of calls, messages, reports, and online communications data

- Key limitations and risks of NLP, including transcription errors, translation inaccuracies, and downstream impacts of false positives

- How NLP enrichments can safely fit into an investigative intelligence workflow, supporting faster triage and more confident decision-making

What’s Ahead in This Series

This is Part 2 of our 6-part series on AI Essentials for Investigative Intelligence. You can watch Part 1 here: The Data Problem.

In the upcoming episodes, we’ll explore:

- Computer Vision: Using AI to analyze images and video at scale, surfacing patterns and evidence that are difficult to identify manually.

- Generative AI: What generative AI tools can (and can’t) do in investigative contexts, with a focus on practical value, limitations, and risk.

- Emerging AI Systems: How multiple AI capabilities integrate within real investigative workflows to deliver operational impact.

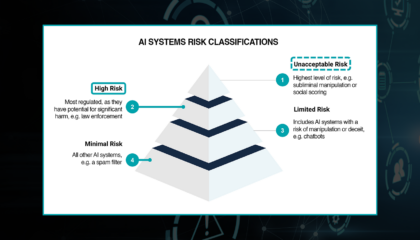

- Compliance and Ethics: Why responsible, transparent, and policy-aligned use of AI is mission-critical in high-risk environments.

Coming Next: Part 3 on Computer Vision.